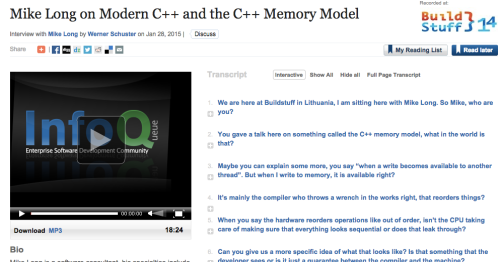

Video – Introducing the C++ Memory Model

The memory model is perhaps one of the most valuable but misunderstood changes in C++11. For the first time, C++ programmers have a language contract with the runtime about how their code will be executed in the face of hardware optimizations, memory hierarchies and multiple threads of execution.

This talk introduces the key concepts in the memory model, and shows how these concepts apply to the new atomic primitives in C++11.
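To give a flavour of what such a contract looks like in code, here is a minimal sketch of the release/acquire pattern using `std::atomic`. It is not taken from the talk; the names `payload`, `ready`, `producer`, `consumer` and `run_once` are made up for illustration.

```cpp
#include <atomic>
#include <cassert>
#include <thread>

// Hypothetical names, for illustration only.
int payload = 0;                  // ordinary, non-atomic data
std::atomic<bool> ready{false};   // flag used to publish the data

void producer() {
    payload = 42;                                  // 1. write the data
    ready.store(true, std::memory_order_release);  // 2. publish it
}

int consumer() {
    // Spin until the flag is observed. The acquire load pairs with the
    // release store above, so the write to payload happens-before the read.
    while (!ready.load(std::memory_order_acquire)) {
        std::this_thread::yield();
    }
    return payload;
}

// Runs one producer and one consumer thread and returns what the consumer saw.
int run_once() {
    payload = 0;
    ready.store(false);
    int seen = 0;
    std::thread t1(producer);
    std::thread t2([&seen] { seen = consumer(); });
    t1.join();
    t2.join();
    return seen;
}
```

Without the release/acquire pairing (for example, with a plain `bool` flag), the read of `payload` would be a data race and the language would make no promise about what the consumer sees.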

Filed under Uncategorized

Long Life Software slides

Here are the slides for the talk I gave at the ACCU 2014 conference.

This is the abstract:

Civil engineers build structures to last. Aerospace engineers build airplanes for the long haul. Automotive engineers build cars to last. How about software engineers?

Not all software needs to be engineered for long life, but in some systems the predicted market span dictates that we plan for the future. How can we do this, given the uncertainties in the technology industry? What can we learn from the past? How can we take informed bets on technologies and plan for change?

This session will cover some of the important technical considerations to make when thinking about the long term.

Large Legacy Software Restoration

Hi folks.

As most of you know, I have been working for some time in a team committed to turning around a large legacy software project. It is a lot of fun to be involved in such a challenge, and I have found it to be a great learning experience. Along the way, I have been taking notes and sharing what I’ve learned at various conferences. I am now distilling this into a book, which I hope will give others a head start on what works and what doesn’t in the large legacy context.

I am looking to connect with others facing similar legacy challenges, so we can share our wins and our battle stories in a supportive community. If you are interested in being involved, please sign up on the large legacy mailing list. Let the discussion begin!

Thanks,

Mike

P.S. Please forward this to anyone you think might be interested!

10x Codebases

There has been talk of 10x developers for as long as there has been software engineering.

I have had the good fortune to work with some profoundly great engineers, and it is fantastic.

But do you know what is much more motivating? 10x codebases.

The codebase you can’t fuck up because you have tests covering your ass.

The codebase you can add new features to without breaking step, because it was thoughtfully designed with change in mind.

The codebase you don’t have to wait for, because the build, test, packaging and deployment are automatic and rock solid.

Is the 10x developer a myth? Who cares. But we have all worked on 0.1x codebases. Do the math.

Christopher Alexander

In my life as an architect, I find that the single thing which inhibits young professionals, new students most severely, is their acceptance of standards that are too low. If I ask a student whether her design is as good as Chartres, she often smiles tolerantly at me as if to say, “Of course not, that isn’t what I am trying to do. . . . I could never do that.”

Then, I express my disagreement, and tell her: “That standard must be our standard. If you are going to be a builder, no other standard is worthwhile. That is what I expect of myself in my own buildings, and it is what I expect of my students.” Gradually, I show the students that they have a right to ask this of themselves, and must ask this of themselves. Once that level of standard is in their minds, they will be able to figure out, for themselves, how to do better, how to make something that is as profound as that.

Two things emanate from this changed standard. First, the work becomes more fun. It is deeper, it never gets tiresome or boring, because one can never really attain this standard. One’s work becomes a lifelong work, and one keeps trying and trying. So it becomes very fulfilling, to live in the light of a goal like this. But secondly, it does change what people are trying to do. It takes away from them the everyday, lower-level aspiration that is purely technical in nature, (and which we have come to accept) and replaces it with something deep, which will make a real difference to all of us that inhabit the earth.

Christopher Alexander, Foreword to Patterns of Software (pdf available here)

Conway’s Second Law

In software, so much of our history can be traced back to this man, Melvin E. Conway.

In 1968, he wrote what became a classic paper in our canon, How Do Committees Invent? This was to be the first exploration of how organizational structure affects the technical outcomes of projects. The most quoted line of the paper is in its conclusion:

“organizations which design systems … are constrained to produce designs which are copies of the communication structures of these organizations”

It later came to be known as Conway’s Law. Like all good “laws”, it is still as valid today as it was when it was first articulated. I don’t want to explore organizational structures here, but there is another illuminating, lesser-known quote from the paper that I want to highlight:

“This point of view has produced the observation that there’s never enough time to do something right, but there’s always enough time to do it over”

Here Dr. Conway is talking about how systematic top-down design processes break down in the face of the uncertainty of discovering a software design. This breakdown causes us to “abandon our creations” with predictable regularity.

But how often do we get the opportunity to do our software over these days? Is it really common to throw away our projects and start again? How much time do we spend re-implementing systems compared to extending the systems we have?

Cleaning Code – Tools and Techniques for Large Legacy Projects

Here are the slides from the presentation I gave at the ACCU conference this year:

Prefactoring re-coined

Two pieces of input struck me this week and caused a bit of a brainwave. The first was Michael Feathers’ article on the sloppiness of refactoring, in which he proposes a nice solution for making sure refactoring actually gets done: do it first.

I like this idea a lot, and I want to introduce it into the working agreement (how I hate that term) of our team. It would take care of a lot of the forgotten refactorings that only seem to be remembered at retrospective time.

The second thing I chanced upon is The Mikado Method book. In it the authors propose a method for improving legacy code that begins with determining the dependency graph of the mini-tasks in the implementation, then starting the work at the leaves of the graph (these are the most independent areas of change in the task).
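As a rough illustration of the "start at the leaves" idea, a post-order walk over the dependency graph yields an order in which every mini-task comes after the tasks it depends on. This is only a sketch under my own assumptions, not the procedure from the book: the `Graph` type, the function names and the task names are hypothetical, and the graph is assumed to have no cycles.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>
#include <vector>

// Hypothetical representation: each task maps to the tasks it depends on.
// Tasks that never appear as a key are leaves (no prerequisites).
using Graph = std::map<std::string, std::vector<std::string>>;

// Post-order depth-first walk: schedule all prerequisites before the task.
// Assumes the graph is acyclic; with a cycle the walk still terminates,
// but the resulting order is not meaningful.
void visit(const Graph& g, const std::string& task,
           std::set<std::string>& done, std::vector<std::string>& order) {
    if (!done.insert(task).second) return;  // already visited
    auto it = g.find(task);
    if (it != g.end()) {
        for (const auto& prerequisite : it->second) {
            visit(g, prerequisite, done, order);
        }
    }
    order.push_back(task);
}

// Returns the tasks reachable from goal, leaves first and goal last.
std::vector<std::string> leaves_first(const Graph& g, const std::string& goal) {
    std::set<std::string> done;
    std::vector<std::string> order;
    visit(g, goal, done, order);
    return order;
}
```

For a graph where `goal` depends on `a` and `b`, and both of those depend on `c`, `leaves_first(g, "goal")` returns `c, a, b, goal`: the shared leaf is handled once, before anything that needs it.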

What both these approaches essentially give us is a pre-refactoring step in implementation. I was so excited by these commonalities that I coined the term Prefactoring. Imagine my dismay at learning that not only had Prefactoring already been coined as a term in our lexicon, but there was even a book written with that title. Unfortunately it means something entirely different…

Global Day of Coderetreat 2012 – Beijing, China

Beijing will participate in Global Day of Coderetreat again this year! Sign up here: http://www.meetup.com/BeijingSoftwareCraftsmanship/events/90858762/